Most teams do not have an AI problem. They have a thinking-continuity problem.

The work starts in one place, moves to another, gets summarized somewhere else, and eventually becomes a decision no one can fully reconstruct. The important context is scattered across chat threads, meeting notes, documents, tasks, and people's memories.

Then AI gets added on top.

If AI is only used as a private prompt box, it can make individuals faster while making team context even more fragmented. One person generates a draft. Another person generates a different version. A third person asks the same question again. The work accelerates, but the shared understanding does not.

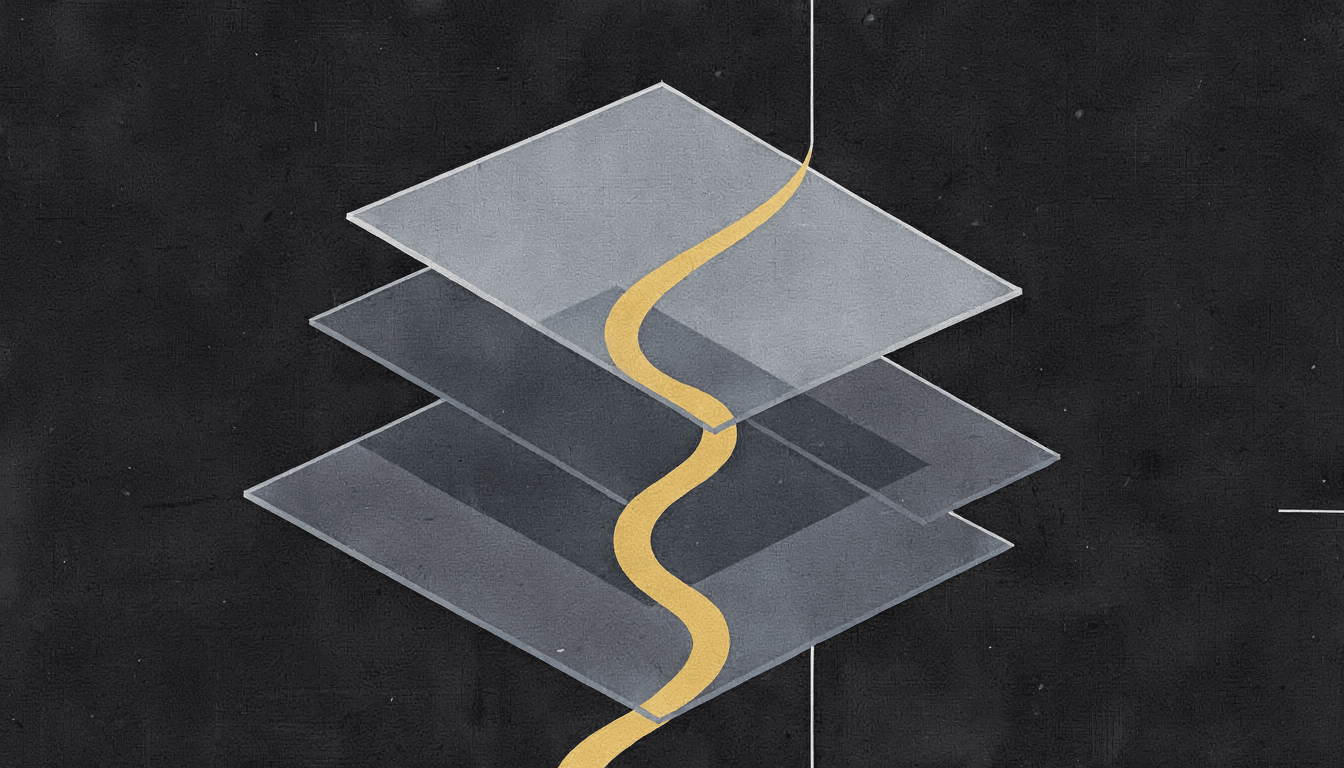

That is why I think teams need a thinking stack.

Not a complicated methodology. Not another management framework. A simple way to describe how people and AI move from messy context to durable progress.

What Is a Thinking Stack?

A thinking stack is the set of layers that help a team turn raw context into clear work.

In practice, it answers six questions:

- What context are we working from?

- What question are we actually trying to answer?

- What options or interpretations are on the table?

- What does human judgment accept, reject, or change?

- What artifact should survive the conversation?

- What follow-through is allowed, and what needs approval?

When those layers are explicit, AI becomes much more useful. It is no longer just producing text. It is helping the team preserve context, expose tradeoffs, and move from conversation to artifact.
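One way to picture "explicit" is to treat the six questions as fields on a single record and check which ones are still empty. This is only a sketch: `ThinkingStack`, its field names, and `missing_layers` are hypothetical names invented for illustration, not part of any existing tool.

```python
from dataclasses import dataclass, field, fields


@dataclass
class ThinkingStack:
    """One record per piece of work. Every name here is a hypothetical illustration."""
    context: list[str] = field(default_factory=list)         # what we are working from
    question: str = ""                                        # what we are actually deciding
    options: list[str] = field(default_factory=list)          # interpretations on the table
    judgment: list[str] = field(default_factory=list)         # what was accepted, rejected, or changed
    artifact: str = ""                                        # what should survive the conversation
    follow_through: list[str] = field(default_factory=list)   # next steps and what needs approval


def missing_layers(stack: ThinkingStack) -> list[str]:
    """Return the names of layers that are still empty, in stack order."""
    return [f.name for f in fields(stack) if not getattr(stack, f.name)]


stack = ThinkingStack(
    context=["customer notes", "product constraints"],
    question="What is the smallest pilot that proves value?",
)
print(missing_layers(stack))
# ['options', 'judgment', 'artifact', 'follow_through']
```

The point of the check is not enforcement. It simply makes it visible when a team has jumped from context straight to output.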

Layer One: Shared Context

AI collaboration starts with context, but not just any context.

A useful system needs to know what room it is in. Who is involved? What is the goal? What has already been tried? What is sensitive? Which documents matter? What decisions are already settled?

This is where many AI workflows fail. People keep feeding the model fragments of the work and expecting it to behave as if it understands the whole room.

It does not.

If the context is private, partial, or stale, the output will look confident while missing the point. A strong thinking stack begins by making the working context visible and shared.

The practical test:

Could someone return to this work next week and understand what the team knew at the time?

If not, the stack is already weak.
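The practical test can be approximated with a dated snapshot of the room. The sketch below assumes a one-week freshness window, taken loosely from "next week" in the test above. `ContextSnapshot`, its fields, the dates, and the sample values are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class ContextSnapshot:
    """What the team knew on a given day. All field names are hypothetical."""
    as_of: date
    goal: str
    participants: tuple[str, ...]
    sources: tuple[str, ...]            # the documents that matter
    settled_decisions: tuple[str, ...]  # what is no longer up for debate

    def is_stale(self, today: date, max_age_days: int = 7) -> bool:
        """True if the snapshot is older than the assumed freshness window."""
        return today - self.as_of > timedelta(days=max_age_days)


# Illustrative values only.
snapshot = ContextSnapshot(
    as_of=date(2024, 1, 8),
    goal="Decide on an Early Access pilot",
    participants=("product", "sales"),
    sources=("customer notes", "product constraints"),
    settled_decisions=("No new integrations before launch",),
)

print(snapshot.is_stale(today=date(2024, 1, 12)))  # False: 4 days old
print(snapshot.is_stale(today=date(2024, 1, 22)))  # True: 14 days old
```

Freezing the record is a deliberate choice in this sketch: a snapshot describes what was known at the time, so updates create a new snapshot instead of rewriting the old one.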

Layer Two: The Real Question

Teams often ask AI for outputs before they have framed the question.

"Write the brief."

"Summarize the research."

"Make this better."

Those are tasks, not questions.

A better thinking stack forces the team to name what is actually being decided. Are we choosing an ideal customer profile (ICP)? Stress-testing a launch promise? Comparing product paths? Turning a customer conversation into follow-up? Deciding what not to build?

AI can help here, but only if it is allowed to challenge the frame.

One of the most valuable AI contributions is not an answer. It is:

"I think you are asking three different questions at once."

That is a useful collaborator.

Layer Three: Options, Not Answers

The fastest way to misuse AI is to treat the first plausible response as the answer.

Good team thinking usually needs options. It needs the strong case, the weak case, the counterargument, the overlooked risk, and the boring practical path.

AI is useful because it can generate and compare those possibilities quickly. But the goal is not volume. The goal is better contrast.

For example:

- What is the bold version?

- What is the conservative version?

- What would a customer object to?

- What would an investor misunderstand?

- What would break operationally?

- What is the simplest version that still proves the point?

This is where AI can make a room sharper. Not by replacing judgment, but by giving judgment better material to work with.

Layer Four: Human Judgment

The human layer is not a ceremonial approval step.

It is where taste, accountability, ethics, timing, and lived context enter the system.

AI can identify patterns. It can draft. It can synthesize. It can compare. But it does not carry the cost of the decision. People do.

That means human judgment has to be designed into the stack. The system should make it easy to mark what is accepted, what is rejected, what is unresolved, and why.

This matters because the "why" is often more valuable than the output.

A decision brief without rationale is fragile. A launch plan without tradeoffs is shallow. A strategy memo without rejected alternatives is hard to trust.

The thinking stack should preserve the judgment, not just the final text.
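Preserving the judgment can be as simple as refusing to record a verdict without its reason. Here is a minimal sketch, assuming a record per option. `Verdict`, `Judgment`, and the "team lead" owner are hypothetical names; the three options are borrowed from the pilot example later in this post.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ACCEPTED = "accepted"
    REJECTED = "rejected"
    UNRESOLVED = "unresolved"


@dataclass(frozen=True)
class Judgment:
    """A human decision about one option. All names are hypothetical."""
    option: str
    verdict: Verdict
    rationale: str   # the "why", which must not be empty
    decided_by: str  # the person who carries the cost of the decision

    def __post_init__(self) -> None:
        if not self.rationale.strip():
            raise ValueError("a judgment needs a rationale, not just a verdict")
        if not self.decided_by.strip():
            raise ValueError("a judgment needs a named human owner")


judgments = [
    Judgment("Broad workflow pilot", Verdict.REJECTED, "Attractive but too risky", "team lead"),
    Judgment("Technical integration pilot", Verdict.REJECTED, "Premature", "team lead"),
    Judgment("Decision-brief pilot", Verdict.ACCEPTED, "Fits the launch promise", "team lead"),
]

# Rejected alternatives stay on the record next to the accepted one.
rejected = [j.option for j in judgments if j.verdict is Verdict.REJECTED]
print(rejected)
# ['Broad workflow pilot', 'Technical integration pilot']
```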

Layer Five: Durable Artifacts

Conversation is not enough.

A good collaboration system turns conversation into artifacts that survive:

- Decision briefs.

- Research summaries.

- Product specs.

- Execution packs.

- Follow-up plans.

- Customer notes.

- Open questions.

- Risk registers.

The artifact is where shared intelligence becomes useful. It gives the team something to inspect, improve, reuse, and hand off.

This is also where many AI workflows lose value. The work happens in a chat, but the useful output has to be manually copied, cleaned, and rebuilt somewhere else.

That is a tax.

The stronger pattern is for AI to participate in the creation of the artifact while keeping the reasoning visible.

Layer Six: Bounded Follow-Through

The final layer is action.

Not full autonomy. Not "let the agent run the company." Bounded follow-through.

AI should be able to prepare the next steps. It might draft the email, assemble the task list, prepare the meeting agenda, summarize the decision, or propose the execution pack.

But the system needs to distinguish between:

- Suggested action.

- Prepared action.

- Approved action.

- Completed action.

That distinction is not bureaucracy. It is trust design.

If teams cannot see where AI suggestion ends and human approval begins, they will either underuse the system or stop trusting it.
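The four states read naturally as a small state machine in which nothing reaches "completed" without passing through a human approval. The sketch below is one possible shape, not a prescribed design. `ActionState`, `Action`, and the approver name "Dana" are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class ActionState(Enum):
    SUGGESTED = 1
    PREPARED = 2
    APPROVED = 3
    COMPLETED = 4


@dataclass
class Action:
    """A follow-through item that can only move one step at a time."""
    description: str
    state: ActionState = ActionState.SUGGESTED
    approved_by: Optional[str] = None

    def prepare(self) -> None:
        self._advance(ActionState.SUGGESTED, ActionState.PREPARED)

    def approve(self, human: str) -> None:
        # Approval is the only step that requires a named person.
        if not human.strip():
            raise ValueError("approval requires a named human")
        self._advance(ActionState.PREPARED, ActionState.APPROVED)
        self.approved_by = human

    def complete(self) -> None:
        self._advance(ActionState.APPROVED, ActionState.COMPLETED)

    def _advance(self, expected: ActionState, new: ActionState) -> None:
        if self.state is not expected:
            raise RuntimeError(f"cannot move to {new.name} from {self.state.name}")
        self.state = new


email = Action("Draft pilot email to customer")
email.prepare()
try:
    email.complete()  # skipping approval is refused
except RuntimeError as err:
    print(err)  # cannot move to COMPLETED from PREPARED
email.approve("Dana")
email.complete()
print(email.state.name, email.approved_by)  # COMPLETED Dana
```

Because each transition checks the state it expects, the boundary between AI suggestion and human approval is recorded on the action itself.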

The Stack in One Example

Imagine a team deciding whether to launch an Early Access pilot with a specific customer.

A weak AI workflow asks:

"Write a pilot plan."

A stronger thinking stack works differently.

First, it gathers the room context: customer notes, product constraints, current launch promise, open risks, and desired proof.

Then it frames the real question:

"What is the smallest pilot that proves value without widening the product promise?"

Then it generates options:

- A narrow decision-brief pilot.

- A broader workflow pilot.

- A technical integration pilot.

Then the team applies judgment:

"The broad workflow pilot is attractive but too risky. The integration pilot is premature. The decision-brief pilot fits the current launch promise."

Then the system produces a durable artifact:

- Pilot objective.

- Success criteria.

- Demo-safe action.

- Boundaries.

- Open questions.

- Follow-up plan.

Finally, AI prepares bounded follow-through:

- Draft email for review.

- Internal execution checklist.

- Pilot kickoff agenda.

That is not just prompting. That is shared work.

Why This Matters

The future of AI collaboration will not be won by teams that generate the most content. It will be won by teams that preserve the best context.

The thinking stack is a way to keep that context alive.

It gives AI a better role. It gives humans better leverage. It gives teams a way to move faster without losing the reasoning behind the work.

That is the point of shared intelligence: not more isolated outputs, but better collective thinking that turns into durable progress.